Table of contents

- Introduction

- High-Level Architecture Overview

- Deep Dive into Qwen-Audio: A Symphony of Sound and Language

- The Visual Frontier: Qwen-VL's Pioneering Vision

- OpenSearch (LLM-Based Conversational Search Edition): One-Stop Multimodal SaaS RAG

- Qwen-Agent: The Architect of Intelligent Interaction

- Model Studio: The GenAI Powerhouse

- The API: Your Multimodal Maestro

- Use Cases: Bringing Multimodal AI to Life

- Conclusion

By Farruh

Introduction

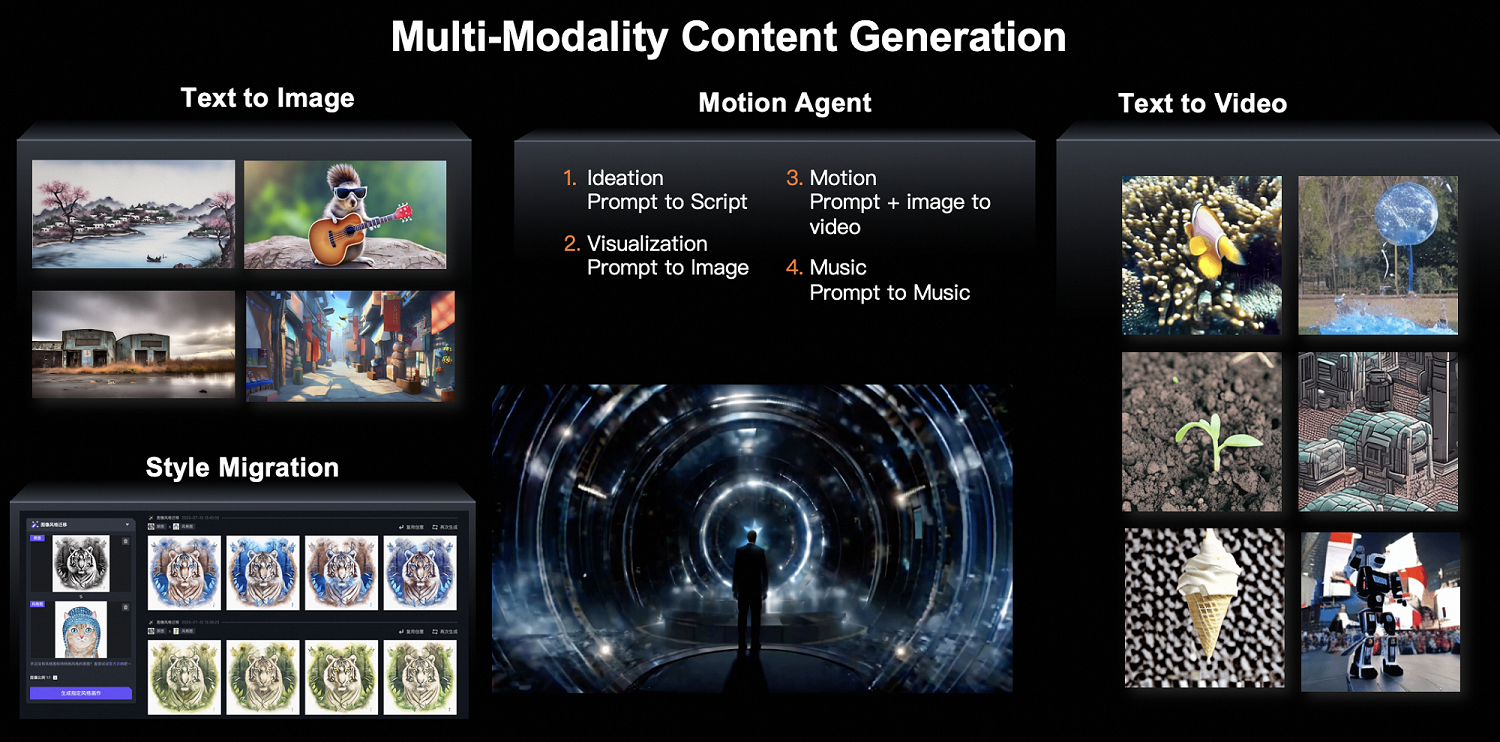

We are on the brink of a new era in artificial intelligence. Multimodal AI, which combines audio, visual, and textual data, is no longer just a concept but a practical reality. The Qwen Family of Large Language Models (LLMs) is crucial in this development. This blog will be your guide to comprehending and applying multimodal AI with Alibaba Cloud's Model Studio, Qwen-Audio, Qwen-VL, Qwen-Agent, and OpenSearch (LLM-Based Conversational Search Edition).

High-Level Architecture Overview

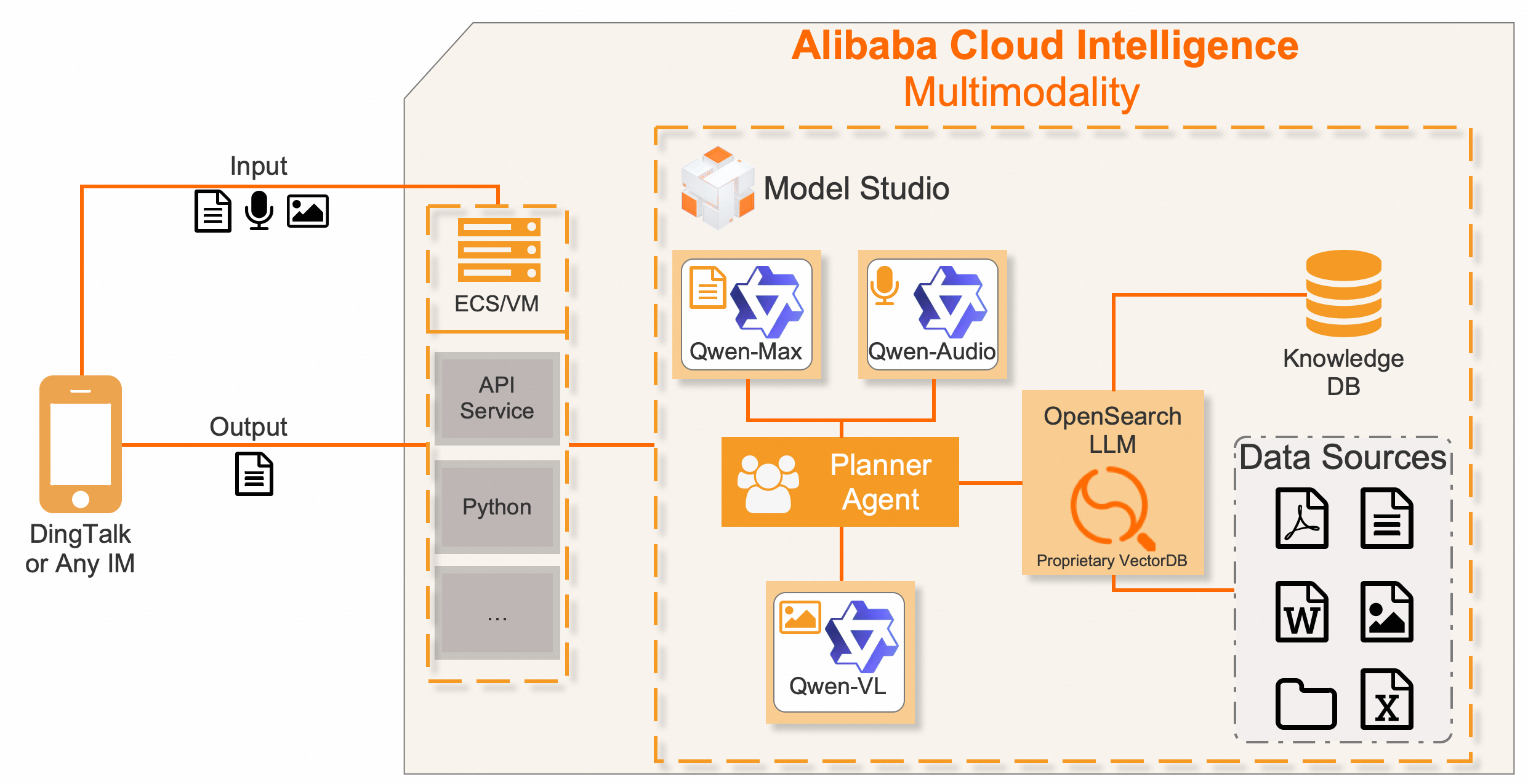

At its core, the multimodal AI we discuss today hinges on the following technological pillars:

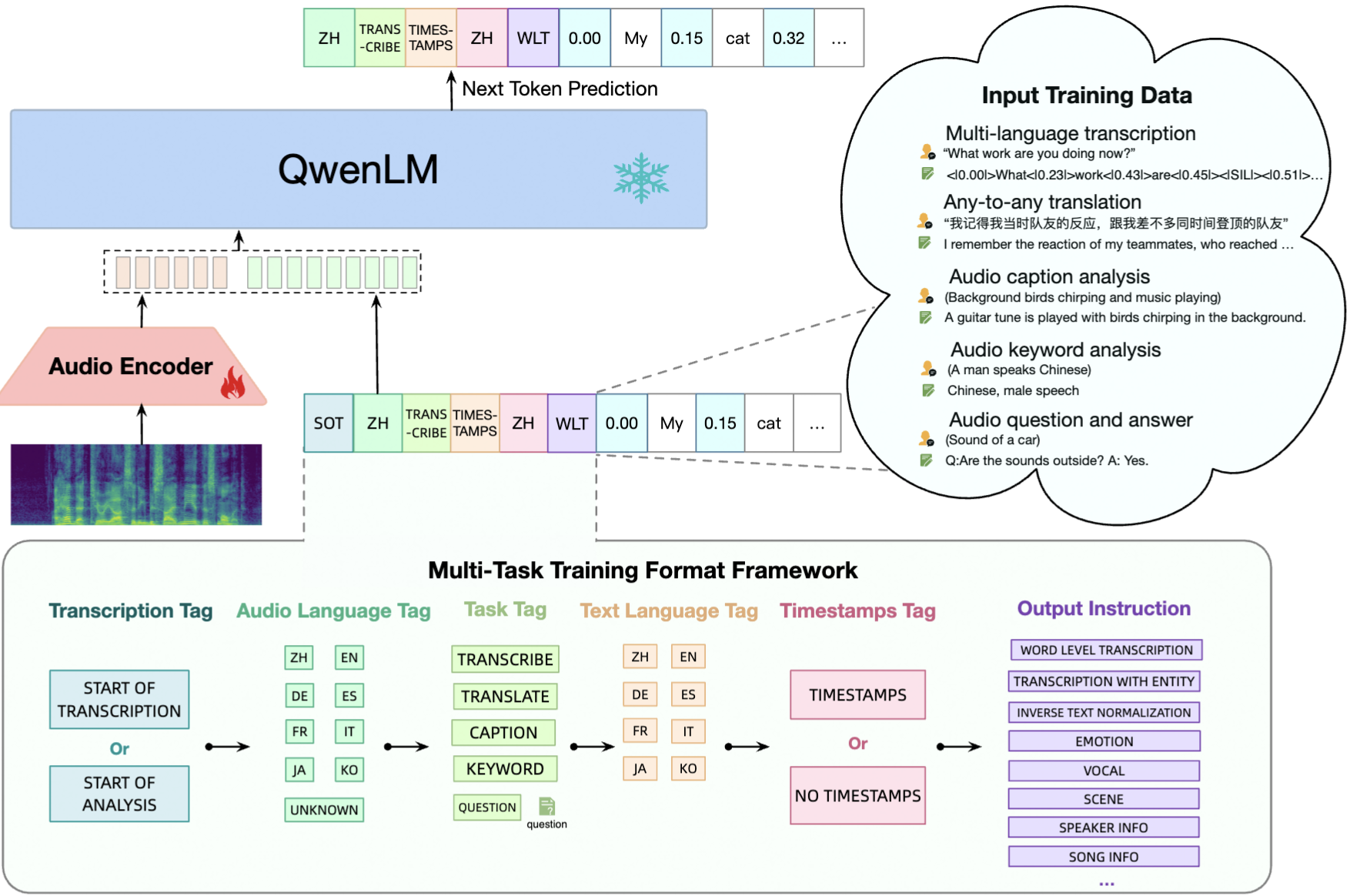

Qwen-Audio: Processes a wide array of audio inputs, converting them into actionable text.

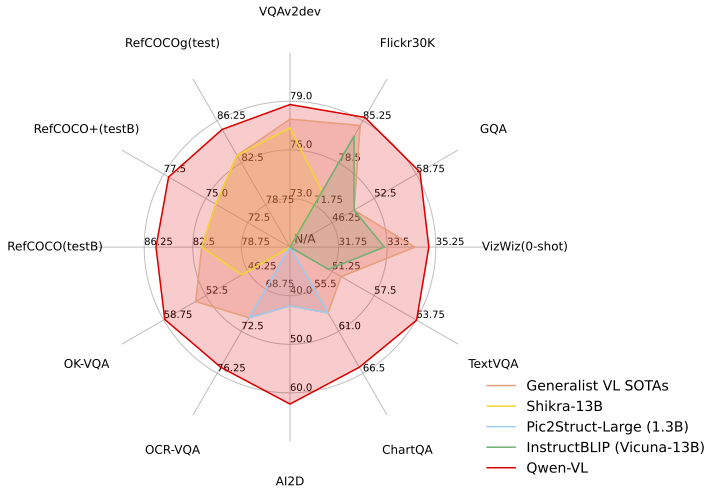

Qwen-VL: Analyzes images with unprecedented precision, revealing nuanced details and text within visuals.

OpenSearch (LLM-Based Conversational Search Edition): Tailors Q&A systems to specific enterprise needs, leveraging vector retrieval and large-scale models.

Qwen-Agent: Orchestrates intelligent agents that follow instructions and execute complex tasks.

Model Studio: The one-stop AI development platform that brings our multimodal ecosystem to life.

A planner agent controls all of these components and the logic between them. Running on Model Studio, the Planner Agent integrates them into a single generative AI pipeline. On top of this pipeline, a Python API is created, ready for deployment on Alibaba Cloud's Elastic Compute Service (ECS) and for connection to DingTalk IM or any other IM platform you choose.
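To make the planner idea concrete, here is a minimal sketch of how a planner could route each request by modality and hand the resulting text to a retrieval step. Everything in it is an assumption made for illustration: `Request`, `PlannerAgent`, and the three stub functions are hypothetical names, and the stubs only stand in for calls to Qwen-Audio, Qwen-VL, and OpenSearch rather than showing their real APIs.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Request:
    modality: str  # "audio", "image", or "text"
    payload: str   # file path, URL, or raw text


# Hypothetical stand-ins for the real services; each returns a placeholder string.
def transcribe_audio(payload: str) -> str:
    return f"[transcript of {payload}]"


def describe_image(payload: str) -> str:
    return f"[description of {payload}]"


def search_knowledge_base(query: str) -> str:
    return f"[answer for: {query}]"


class PlannerAgent:
    """Converts any supported input to text, then queries the knowledge base."""

    def __init__(self) -> None:
        # One text-conversion step per modality; plain text passes through unchanged.
        self.to_text: Dict[str, Callable[[str], str]] = {
            "audio": transcribe_audio,
            "image": describe_image,
            "text": lambda payload: payload,
        }

    def handle(self, request: Request) -> str:
        if request.modality not in self.to_text:
            raise ValueError(f"unsupported modality: {request.modality}")
        query = self.to_text[request.modality](request.payload)
        return search_knowledge_base(query)
```

Keeping the modality handlers in a dictionary means a new input type can be added without changing `handle`, which is one simple way to keep the orchestration logic separate from the individual models.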

Deep Dive into Qwen-Audio: A Symphony of Sound and Language

Qwen-Audio is more than an audio processing tool: it is an audio-language model that handles everything from human speech to the subtleties of music. It transforms audio into text, redefining how we interact with machines using sound as a medium.

The Visual Frontier: Qwen-VL's Pioneering Vision

In the realm of vision, Qwen-VL stands tall with models like Qwen-VL-Plus and Qwen-VL-Max that set new benchmarks in image processing. These models not only match but exceed the capabilities of industry giants, offering an extraordinary level of visual understanding. Whether it's recognizing minute details in a million-pixel image or comprehending complex visual scenes, Qwen-VL is your lens to clarity.

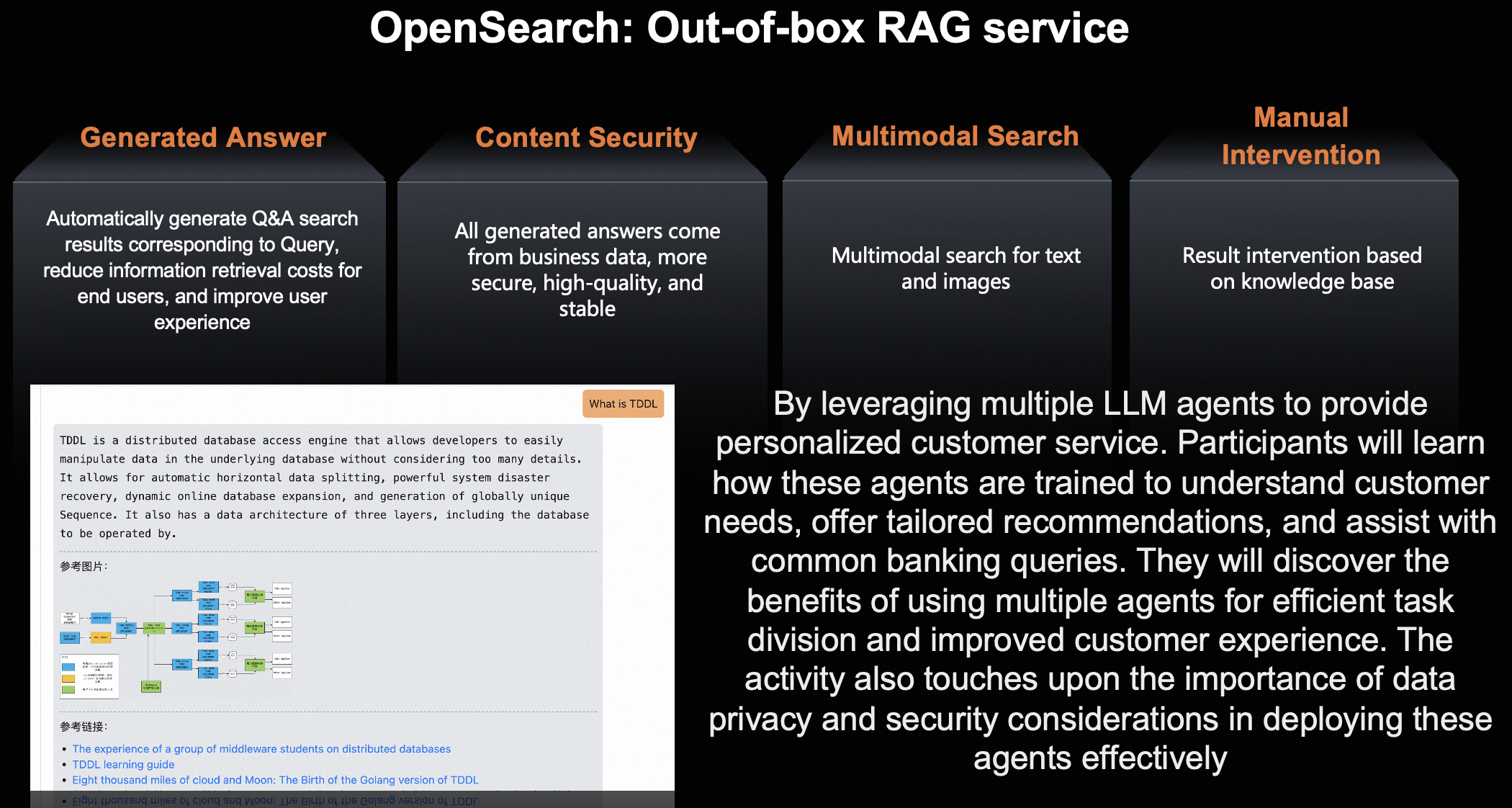

OpenSearch (LLM-Based Conversational Search Edition): One-Stop Multimodal SaaS RAG

OpenSearch (LLM-Based Conversational Search Edition) embodies the quest for precision in a sea of data. It's the beacon that enterprises need to navigate the complexities of industry-specific Q&A systems. The approach is straightforward: vectorize your business data, index it, and let OpenSearch retrieve the passages most relevant to each question so that the large model can ground its answer in your enterprise's own content.
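The vectorize, index, and retrieve flow described above can be illustrated with a toy example. This is only a sketch under loud assumptions: it does not use the OpenSearch API, the "embedding" is a plain word count instead of a learned vector, and `embed`, `cosine`, `VectorIndex`, and `build_prompt` are hypothetical names chosen for the illustration.

```python
import math
from collections import Counter
from typing import List, Tuple


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would call an embedding model."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorIndex:
    """In-memory index that stores each document next to its vector."""

    def __init__(self) -> None:
        self._docs: List[Tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self._docs.append((text, embed(text)))

    def search(self, query: str, top_k: int = 1) -> List[str]:
        query_vec = embed(query)
        ranked = sorted(self._docs, key=lambda doc: cosine(query_vec, doc[1]), reverse=True)
        return [text for text, _ in ranked[:top_k]]


def build_prompt(question: str, passages: List[str]) -> str:
    """Assemble the retrieved passages and the question into one prompt for the LLM."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


index = VectorIndex()
index.add("refunds are processed within five business days")
index.add("our office is open monday to friday")
top_passages = index.search("how long do refunds take")
prompt = build_prompt("how long do refunds take", top_passages)
```

In a production setup the managed service would take over embedding, indexing, and ranking; the sketch only shows why retrieval comes before generation.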

Qwen-Agent: The Architect of Intelligent Interaction

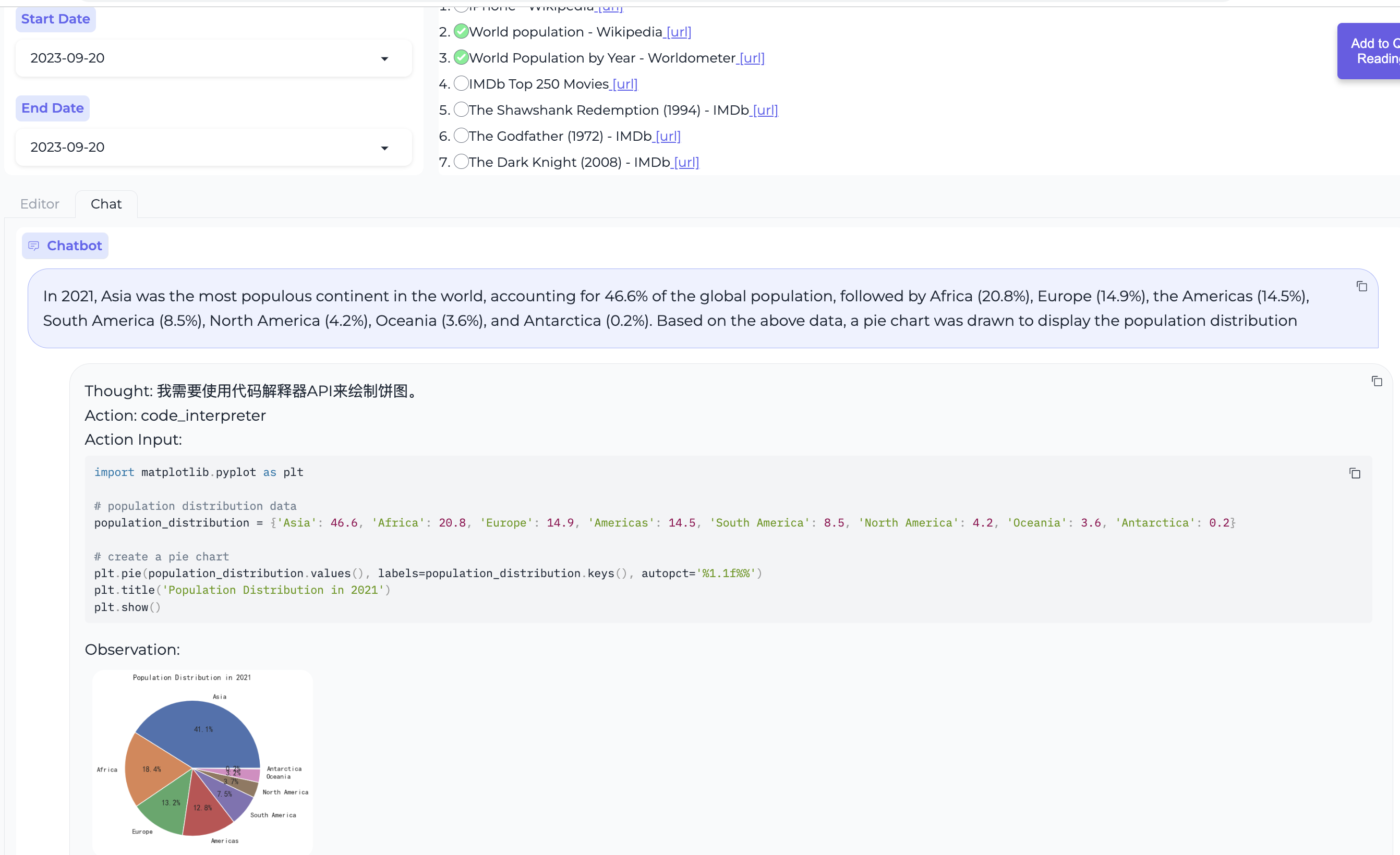

The Qwen-Agent framework is where the building blocks of intelligence are assembled to create something truly special. With it, developers can build agents that not only understand instructions but can also use tools, plan, and remember. The result is more than a single model: it is an agent that can adapt to your application's needs.
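The three capabilities named above (tool use, planning, and memory) can be sketched in a few lines of plain Python. This is not the Qwen-Agent API: `ToyAgent` and its tools are hypothetical, and the keyword-based `plan` method is an assumption standing in for the step where a real agent would ask the LLM to choose its tools.

```python
from typing import Callable, Dict, List, Tuple

Tool = Callable[[str], str]


class ToyAgent:
    """Illustrative agent with a tool registry, a simple planner, and a memory log."""

    def __init__(self, tools: Dict[str, Tool]) -> None:
        self.tools = tools
        # Memory: one (tool name, input, output) record per executed step.
        self.memory: List[Tuple[str, str, str]] = []

    def plan(self, instruction: str) -> List[str]:
        """Pick the tools whose names appear in the instruction, in order of mention."""
        text = instruction.lower()
        found = [(text.find(name), name) for name in self.tools if name in text]
        return [name for _, name in sorted(found)]

    def run(self, instruction: str, data: str) -> str:
        result = data
        for name in self.plan(instruction):
            output = self.tools[name](result)
            self.memory.append((name, result, output))
            result = output
        return result


# Hypothetical tools that only wrap their input in a label.
agent = ToyAgent({
    "transcribe": lambda s: f"transcript({s})",
    "summarize": lambda s: f"summary({s})",
})
final = agent.run("Transcribe the call, then summarize it", "call.wav")
```

After the run, `agent.memory` holds the intermediate results of each step, which is the kind of record an agent can consult on later turns.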

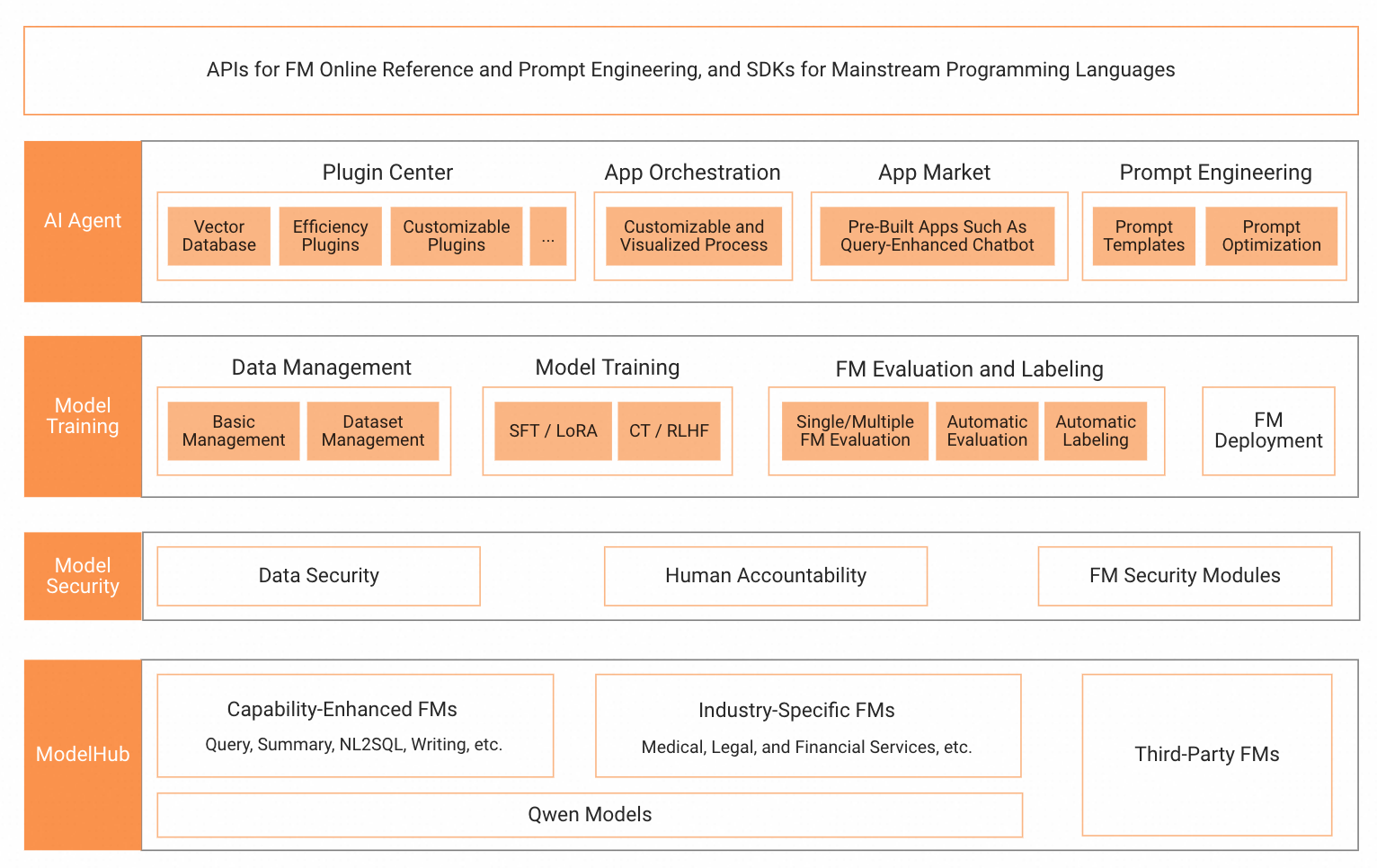

Model Studio: The GenAI Powerhouse

At the heart of this ecosystem lies Model Studio, Alibaba Cloud's generative AI playground. This is where models are not just trained but born, tailored to the unique requirements of each application. It's where the full spectrum of AI — from data management to deployment — comes together in a secure, responsible, and efficient manner.

The API: Your Multimodal Maestro

The final act in our symphony is the creation of a unified API. Using Python and a web framework such as Flask, we will encapsulate the intelligence of our multimodal models into an accessible, scalable, and robust service. Deployed on ECS, this API will become the bridge that connects your applications to the intelligent orchestration of Qwen LLMs, ready to be engaged via DingTalk IM or any IM service of your preference.
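As a rough picture of what such a unified endpoint could look like, the sketch below exposes a single `POST /chat` route. To stay dependency-free it is written as a plain WSGI callable using only the standard library; in a Flask-style framework the same logic would live in a route handler. The route name, the JSON fields `modality` and `content`, and the `run_pipeline` placeholder are all assumptions made for this illustration, not part of any Alibaba Cloud API.

```python
import io
import json
from typing import Callable, Iterable, Tuple


def run_pipeline(modality: str, content: str) -> str:
    """Hypothetical placeholder for the planner pipeline behind the API."""
    return f"processed {modality}: {content}"


def _respond(start_response: Callable, status: str, payload: dict) -> Iterable[bytes]:
    data = json.dumps(payload).encode("utf-8")
    headers = [("Content-Type", "application/json"), ("Content-Length", str(len(data)))]
    start_response(status, headers)
    return [data]


def app(environ: dict, start_response: Callable) -> Iterable[bytes]:
    """WSGI application with one JSON endpoint: POST /chat."""
    if environ.get("REQUEST_METHOD") != "POST" or environ.get("PATH_INFO") != "/chat":
        return _respond(start_response, "404 Not Found", {"error": "not found"})
    try:
        length = int(environ.get("CONTENT_LENGTH") or 0)
        body = json.loads(environ["wsgi.input"].read(length) or b"{}")
        modality, content = body["modality"], body["content"]
    except (ValueError, KeyError, TypeError):
        message = "expected JSON with 'modality' and 'content'"
        return _respond(start_response, "400 Bad Request", {"error": message})
    return _respond(start_response, "200 OK", {"reply": run_pipeline(modality, content)})


def call_app(method: str, path: str, body: bytes = b"") -> Tuple[str, dict]:
    """Tiny in-process client for trying the app without starting a server."""
    captured = {}

    def start_response(status: str, headers: list) -> None:
        captured["status"] = status

    environ = {
        "REQUEST_METHOD": method,
        "PATH_INFO": path,
        "CONTENT_LENGTH": str(len(body)),
        "wsgi.input": io.BytesIO(body),
    }
    raw = b"".join(app(environ, start_response))
    return captured["status"], json.loads(raw)
```

For a quick local trial, the callable can be served with the standard library's `wsgiref.simple_server.make_server`; a deployment on ECS would normally sit behind a production WSGI server instead. An IM integration would then forward each incoming message to this endpoint and post the `reply` field back to the chat.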

The overall steps for integrating the Qwen Family LLMs with Model Studio are as follows:

- Initial setup and configuration of Model Studio.

- Detailed instructions for integrating Qwen-Audio and Qwen-VL with your applications.

- Strategies for leveraging OpenSearch to create intelligent enterprise solutions.

- Best practices for developing and deploying Qwen-Agent for enhanced AI interactions.

- Tips for orchestrating all these components into a single, cohesive API.

- Deployment guidelines for Alibaba Cloud ECS and connectivity with DingTalk IM.

By following the detailed step-by-step tutorials, you will become adept at creating AI applications that can see, hear, and understand the world in ways that were previously unimaginable.

Use Cases: Bringing Multimodal AI to Life

Multimodal AI isn't a distant dream — it's already unlocking new opportunities across various industries. Here are some real-world applications where the Qwen Family LLMs and Model Studio integration can make a significant impact:

Customer Service Enhancement

Imagine a customer service system that not only understands text queries but can also interpret the customer's voice through Qwen-Audio. It can also analyze images the customer sends by using Qwen-VL, providing a more personalized and responsive service experience.

Advanced Healthcare Solutions

In healthcare, multimodal AI can revolutionize patient care. Qwen-VL can assist radiologists by identifying anomalies in medical imaging, while Qwen-Audio can transcribe and analyze patient interviews, and OpenSearch can deliver swift, accurate answers to complex medical inquiries.

Smart Education Platforms

Multimodal AI can tailor educational content to individual learning styles. Qwen-Audio can evaluate and give feedback on language pronunciation, Qwen-VL can analyze written assignments, and OpenSearch can provide students with in-depth explanations and study materials.

Efficient Retail Operations

In retail, multimodal AI can create immersive shopping experiences. Customers can search for products with natural-language voice commands, and Qwen-VL can recommend items based on visual cues, such as colors or styles, from a photo or video.

Legal and Compliance Research

Law firms and compliance departments can leverage multimodal AI to sift through vast amounts of legal documents. Qwen-Agent, powered by OpenSearch, can provide precise legal precedents and relevant case law, streamlining legal research and decision-making.

Conclusion

The convergence of multimodal AI technologies is paving the way for applications that can engage with the world in a human-like manner. The Qwen Family LLMs, each specialized in its own domain, represent the building blocks of this intelligent future. With Model Studio as your development hub, the ability to create advanced, intuitive, and responsive AI applications is now at your fingertips.

Embark on this journey with us as we explore the limitless potential of multimodal AI. Stay tuned for "Multimodality Unleashed: Integrating Qwen Family LLMs with Model Studio," the tutorial that will transform the way you think about and implement AI in your projects.

Start your multimodal AI adventure here

Thank you for joining me on this exploration of multimodal AI. Your journey into the next dimension of artificial intelligence starts now.